Re-comparing file systems

25 Apr 2009

The previous attempt at comparing file systems, based on their ability to allocate large files and zero them, met with some interesting feedback. I was asked why I didn't add reiserfs to the tests and whether I could test with larger files.

The test itself had a few problems, making the results unfair:

- I had different partitions for different file systems, so hard drive geometry and seek times would play a part in the test results

- One can never be sure that the data that was requested to be written to the hard disk was actually written unless one unmounts the partition

- Other data that was in the cache before starting the test could be in the process of being written out to the disk and that could also interfere with the results

All these have been addressed in the newer results.
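To illustrate the second and third points, here is a minimal sketch of a timed write that flushes unrelated dirty data before the clock starts and does not stop the clock until the file's own data has been pushed to the device. It is an illustration only: `timed_write` is a hypothetical name, and `fsync()` is used here as a lighter stand-in for unmounting the partition.

```python
import os
import tempfile
import time


def timed_write(path, size, chunk=4096):
    """Write `size` zero bytes to `path`; return elapsed seconds, flush included."""
    os.sync()  # push out unrelated dirty pages before the clock starts
    buf = bytes(chunk)
    start = time.monotonic()
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o644)
    try:
        remaining = size
        while remaining > 0:
            # os.write returns the number of bytes actually written
            remaining -= os.write(fd, buf[:min(chunk, remaining)])
        os.fsync(fd)  # block until this file's data has reached the disk
    finally:
        os.close(fd)
    return time.monotonic() - start


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        elapsed = timed_write(os.path.join(tmp, "probe"), 1 << 20)
        print(f"1 MiB written and flushed in {elapsed:.3f}s")
```

Without the `fsync()` call, the measured time could simply be the time taken to fill the page cache.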

There are a few more goodies too:

- gnuplot script to ease the charting of data

- A script to automate testing on various file systems

- A big bug fixed that affected the results for the chunk-writing cases (4k and 8k): this existed right from the time I first wrote the test and was the result of using the wrong parameter for calculating chunk size. This was spotted by Mike Galbraith on lkml.

The scripts and test code can be cloned with git:

git clone https://gitlab.com/amitshah/alloc-perf.git

So in addition to ext3, ext4, xfs and btrfs, I've added ext2 and reiserfs, and expanded the ext3 test to cover three journalling modes: ordered, writeback and guarded. guarded is a newly proposed mode (it's not yet in the Linux kernel) that aims to have the speed of writeback and the consistency of ordered.

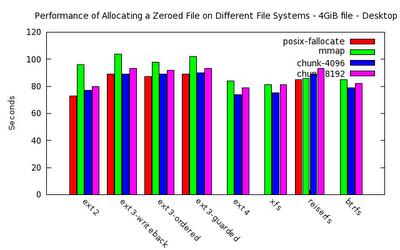

I've run these tests twice. The first run was with a user logged in and a full desktop running, to measure the times a user will see when actually working on the system while some app allocates files.

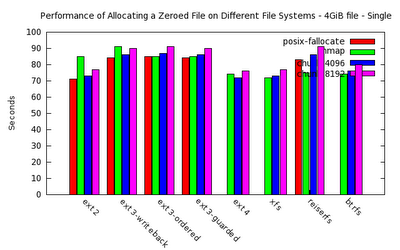

I also ran the tests in single-user mode, so that no background services are running and other processes cannot affect the results. This isolates the timing; the fragmentation will of course remain more or less the same, since it is not a property of system load.

It's also important to note that I created this test suite mainly to find out how fragmented files are when they are allocated using different methods on different file systems. The comparison of performance is a side-effect. This test is also not useful for any kind of stress-testing of file systems. There are other suites that do a good job of that.
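The post does not say which tool produced the fragment counts. One common way to get such a number on Linux is `filefrag` from e2fsprogs, which reports how many extents a file occupies. The sketch below assumes that tool; `parse_extent_count` and `count_fragments` are hypothetical helper names.

```python
import re
import subprocess

# filefrag prints a summary such as "/mnt/test/file: 938 extents found"
_EXTENTS = re.compile(r": (\d+) extents? found")


def parse_extent_count(filefrag_output):
    """Extract the extent count from filefrag's summary line."""
    match = _EXTENTS.search(filefrag_output)
    if match is None:
        raise ValueError(f"unrecognised filefrag output: {filefrag_output!r}")
    return int(match.group(1))


def count_fragments(path):
    """Run filefrag on `path` (assumes e2fsprogs is installed; may need root)."""
    result = subprocess.run(
        ["filefrag", path], capture_output=True, text=True, check=True
    )
    return parse_extent_count(result.stdout)
```

A count of 1 means the file sits in one contiguous extent, which is the best case.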

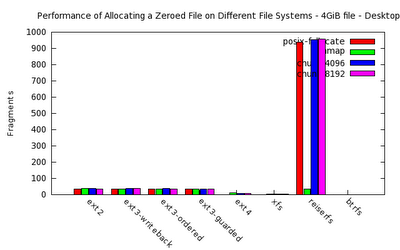

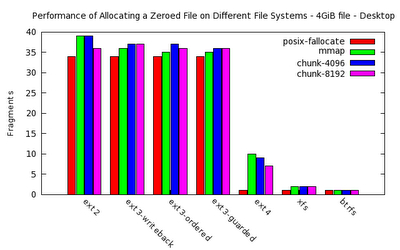

That said, the results suggest that btrfs, xfs and ext4 are the best at keeping the number of fragments low. Reiserfs really looks bad in these tests.

Time-wise, the file systems that support the fallocate() syscall perform the best, using almost no time to allocate files of any size. ext4, xfs and btrfs support this syscall.

On to the tests. I created a 4GiB file for each test. The tests are: posix_fallocate(), mmap+memset, writing 4k-sized chunks and writing 8k-sized chunks. All tests run inside the same 20GiB partition; the script reformats the partition with the appropriate file system before each run.
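For readers who want a feel for the four methods, here is a rough Python sketch of each. It is not the code from the repository: the function names are hypothetical, and the demo uses a 1 MiB file instead of 4GiB.

```python
import mmap
import os
import tempfile


def alloc_fallocate(path, size):
    """Ask the file system to reserve `size` bytes in one call."""
    fd = os.open(path, os.O_RDWR | os.O_CREAT | os.O_TRUNC, 0o644)
    try:
        os.posix_fallocate(fd, 0, size)
    finally:
        os.close(fd)


def alloc_mmap(path, size):
    """Size the file, map it, and zero the mapping (the mmap+memset case)."""
    fd = os.open(path, os.O_RDWR | os.O_CREAT | os.O_TRUNC, 0o644)
    try:
        os.ftruncate(fd, size)  # a mapping cannot extend the file, so size it first
        with mmap.mmap(fd, size) as m:
            zeros = bytes(1 << 20)
            # zero the mapping piecewise to avoid one huge temporary buffer
            for off in range(0, size, len(zeros)):
                n = min(len(zeros), size - off)
                m[off:off + n] = zeros[:n]
            m.flush()
    finally:
        os.close(fd)


def alloc_chunks(path, size, chunk):
    """Write zero-filled chunks of `chunk` bytes until `size` bytes exist."""
    buf = bytes(chunk)
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_TRUNC, 0o644)
    try:
        remaining = size
        while remaining > 0:
            remaining -= os.write(fd, buf[:min(chunk, remaining)])
    finally:
        os.close(fd)


if __name__ == "__main__":
    size = 1 << 20  # 1 MiB for the demo
    with tempfile.TemporaryDirectory() as tmp:
        path = os.path.join(tmp, "testfile")
        alloc_fallocate(path, size)
        alloc_mmap(path, size)
        alloc_chunks(path, size, 4096)
        alloc_chunks(path, size, 8192)
        print(os.path.getsize(path))
```

Only the first method lets the file system see the final size up front, which is why it can avoid both the zeroing work and the fragmentation where fallocate() is supported.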

The results:

The first four columns show the times (in seconds) and the last four columns show the number of fragments resulting from the corresponding test.

The results, in text form, are:

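4GiB file, desktop on:

| filesystem | posix-fallocate (s) | mmap (s) | chunk-4096 (s) | chunk-8192 (s) | posix-fallocate (fragments) | mmap (fragments) | chunk-4096 (fragments) | chunk-8192 (fragments) |
|---|---|---|---|---|---|---|---|---|
| ext2 | 73 | 96 | 77 | 80 | 34 | 39 | 39 | 36 |
| ext3-writeback | 89 | 104 | 89 | 93 | 34 | 36 | 37 | 37 |
| ext3-ordered | 87 | 98 | 89 | 92 | 34 | 35 | 37 | 36 |
| ext3-guarded | 89 | 102 | 90 | 93 | 34 | 35 | 36 | 36 |
| ext4 | 0 | 84 | 74 | 79 | 1 | 10 | 9 | 7 |
| xfs | 0 | 81 | 75 | 81 | 1 | 2 | 2 | 2 |
| reiserfs | 85 | 86 | 89 | 93 | 938 | 35 | 953 | 956 |
| btrfs | 0 | 85 | 79 | 82 | 1 | 1 | 1 | 1 |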

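4GiB file, single-user mode:

| filesystem | posix-fallocate (s) | mmap (s) | chunk-4096 (s) | chunk-8192 (s) | posix-fallocate (fragments) | mmap (fragments) | chunk-4096 (fragments) | chunk-8192 (fragments) |
|---|---|---|---|---|---|---|---|---|
| ext2 | 71 | 85 | 73 | 77 | 33 | 37 | 35 | 36 |
| ext3-writeback | 84 | 91 | 86 | 90 | 34 | 35 | 37 | 36 |
| ext3-ordered | 85 | 85 | 87 | 91 | 34 | 34 | 37 | 36 |
| ext3-guarded | 84 | 85 | 86 | 90 | 34 | 34 | 38 | 37 |
| ext4 | 0 | 74 | 72 | 76 | 1 | 10 | 9 | 7 |
| xfs | 0 | 72 | 73 | 77 | 1 | 2 | 2 | 2 |
| reiserfs | 83 | 75 | 86 | 91 | 938 | 35 | 953 | 956 |
| btrfs | 0 | 74 | 76 | 80 | 1 | 1 | 1 | 1 |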
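If you'd rather poke at the numbers than read them, the single-user rows above can be loaded into a small structure. This is just a sketch: the rows are copied from the results, while `METHODS`, `SINGLE_USER` and `parse_results` are hypothetical names.

```python
METHODS = ["posix-fallocate", "mmap", "chunk-4096", "chunk-8192"]

# Single-user results, copied from the post: four times (s), then four fragment counts
SINGLE_USER = """\
# 4GiB file
# Single
ext2 71 85 73 77 33 37 35 36
ext3-writeback 84 91 86 90 34 35 37 36
ext3-ordered 85 85 87 91 34 34 37 36
ext3-guarded 84 85 86 90 34 34 38 37
ext4 0 74 72 76 1 10 9 7
xfs 0 72 73 77 1 2 2 2
reiserfs 83 75 86 91 938 35 953 956
btrfs 0 74 76 80 1 1 1 1
"""


def parse_results(text):
    """Map each file system to its per-method seconds and fragment counts."""
    results = {}
    for line in text.splitlines():
        line = line.strip()
        # skip blanks, comment lines and the column-header line
        if not line or line.startswith("#") or line.startswith("filesystem"):
            continue
        name, *values = line.split()
        numbers = [int(v) for v in values]
        results[name] = {
            "seconds": dict(zip(METHODS, numbers[:4])),
            "fragments": dict(zip(METHODS, numbers[4:])),
        }
    return results


if __name__ == "__main__":
    for fs, data in parse_results(SINGLE_USER).items():
        worst = max(data["fragments"].values())
        print(f"{fs}: worst case {worst} fragments")
```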


Fig. 4. Time results, without desktop -- in single user mode.